Why the iPad?

When the iPad was announced I decided it was time to take the plunge and develop a game for it. Although the iPhone is a powerful piece of hardware, I just find the screen too limiting. Every game leaves you wishing you had transparent fingers. The iPad seems luxurious with its 1024x768 A4-sized screen!

Having written many game engines over the years, both commercially and for fun, I’ve run out of love for writing them. They are large and difficult to debug, and I wanted to spend my time writing a game.

Starting Out

I looked for engines that I could buy in, and I briefly evaluated SIO2, Oolong and Unity by going through the specs and reading this blog. I don’t really have any great technical insights beyond those in the blog, so I plumped initially for SIO2.

Initially I was quite happy with SIO2. The tutorial videos are great and very instructive on both the use of Blender and the SIO2 codebase. After about two weeks I grew weary of the C code that makes up SIO2. It’s hard to move from C++ to C and the code felt very messy (it’s good C code but that doesn’t make it C++).

Facing the unhappy truth that I would need to assemble my own engine, I started to draw up a plan. The good news was that a large part of the work was assembly and plumbing. My intention was to use Blender as a level editor, Bullet as a collision/physics system, OpenAL as an audio system and CML for maths. That’s a large amount of work imported from credible, stable sources.

Week One: Rolling My Own

The first thing I needed to do was write an exporter for Blender, get the data into Xcode and render it in OpenGL ES. Blender has a Python API and it covers most of what I needed.


For each mesh it’s just a matter of matching faces to materials and generating index, vertex, uv and color arrays for submission to OpenGL.
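As a rough illustration of that step, here is a minimal sketch in plain Python. It is not the exporter itself and does not touch the Blender API; the input layout (a list of `(material_name, corners)` pairs, where each corner is a `(position, uv, color)` tuple) and the function name `build_material_batches` are assumptions made up for this example.

```python
from collections import defaultdict


def build_material_batches(faces):
    """Group triangle faces by material and build indexed arrays.

    `faces` is a hypothetical input format: a list of
    (material_name, [corner, corner, corner]) pairs, where each corner is a
    (position, uv, color) tuple of tuples, so that it can be used as a dict key.
    """
    batches = defaultdict(lambda: {
        "lookup": {},    # corner -> index, used to share identical vertices
        "vertices": [],  # flat x, y, z list
        "uvs": [],       # flat u, v list
        "colors": [],    # flat r, g, b, a list
        "indices": [],   # three indices per triangle
    })

    for material, corners in faces:
        batch = batches[material]
        for corner in corners:
            index = batch["lookup"].get(corner)
            if index is None:
                # First time this exact position/uv/color combination is seen.
                index = len(batch["lookup"])
                batch["lookup"][corner] = index
                position, uv, color = corner
                batch["vertices"].extend(position)
                batch["uvs"].extend(uv)
                batch["colors"].extend(color)
            batch["indices"].append(index)

    # Drop the helper lookup table before handing the arrays on.
    return {
        name: {key: value for key, value in batch.items() if key != "lookup"}
        for name, batch in batches.items()
    }
```

Each material ends up with its own set of arrays, so the renderer can bind the material once and submit everything that uses it in a single indexed draw call.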

All in all it took about 4 days to get it all working.

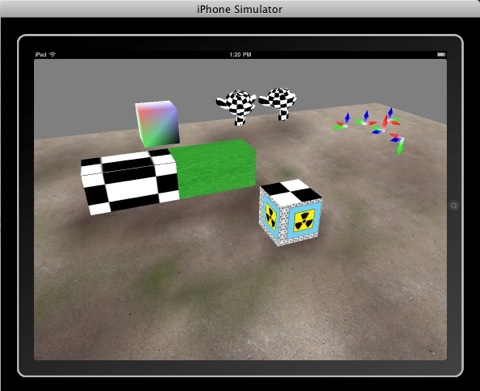

The red/green/blue axes you can see in the top right are tests to see that rotated instances are exported, imported and submitted to OpenGL correctly.

For four days’ work I was pretty impressed. It was all going really well :) The next thing to get working was transparency. Semi-transparent objects need to be rendered AFTER the solid geometry and from back to front in camera space.

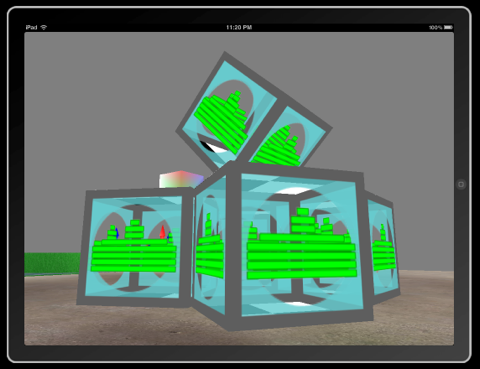

In practice this means that as the geometry for the scene is submitted to be rendered, any material with a transparent texture or alpha value is added to a list. After all the solid geometry has been rendered, these ‘deferred’ strips are projected into camera space (their position relative to the camera is worked out), sorted from back to front and rendered in that order.

Each of the faces in each box is treated as a separate object to be sorted. Rendering from back to front makes sure that the correct geometry appears through the transparent material.
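The deferral and sort described above can be sketched roughly as follows. This is an illustration in Python, not the engine code: the item layout (`alpha` and `centre` keys), the function names, and the row-major 4x4 view matrix applied to column vectors are all assumptions for this example. It relies on the OpenGL convention that the camera looks down the negative Z axis, so the most negative camera-space Z is the furthest away.

```python
def camera_space_z(view, point):
    """Return the camera-space Z of a world-space point.

    `view` is a 4x4 row-major view matrix (list of four rows), applied to a
    column vector (x, y, z, 1). Only the third row is needed for depth.
    """
    x, y, z = point
    row = view[2]
    return row[0] * x + row[1] * y + row[2] * z + row[3]


def render_order(view, items):
    """Return items in draw order: opaque first, then transparent back to front.

    Each item is a hypothetical dict with an "alpha" value and a world-space
    "centre" point. Anything with alpha below 1.0 is deferred.
    """
    opaque = [item for item in items if item["alpha"] >= 1.0]
    deferred = [item for item in items if item["alpha"] < 1.0]

    # The camera looks down -Z, so ascending Z puts the furthest item first.
    deferred.sort(key=lambda item: camera_space_z(view, item["centre"]))
    return opaque + deferred
```

Sorting on a single representative point per face is an approximation. It works well for small, separate faces like the sides of the boxes, but large or intersecting transparent faces can still sort incorrectly.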

Next I moved on to collision and physics… Bullet to the rescue.
